Academic Research Enhancement Award (AREA, R15) grants support small-scale research projects in the biomedical and behavioral sciences conducted by faculty and students at educational institutions that have not been major recipients of NIH research grant funds. Recently, a faculty member at an AREA grant-eligible institution wrote to NIGMS Director Jon Lorsch urging the Institute to support more AREA grants, arguing that these grants not only train students but are also cost-effective. This prompted us to take a close look at our portfolio of R15 grants. I’d like to share what we found. Thanks to Tony Moore and Ching-Yi Shieh for providing data in the figures.

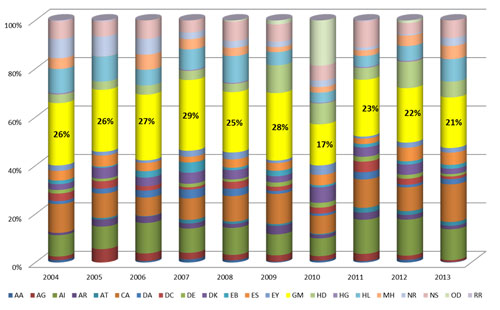

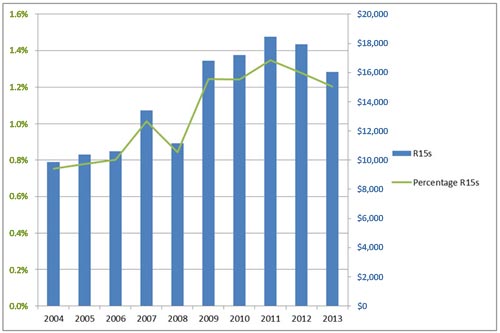

NIGMS receives the largest number of R15 applications of any NIH institute. This is not surprising, since faculty and students at eligible institutions typically focus on basic research using model organisms and systems. Table 1 shows that the number of AREA grants awarded by NIGMS in each of the last 10 fiscal years has varied from a high of 63 in Fiscal Year 2007 to a low of 36 in Fiscal Year 2010 and that total funding for these grants has ranged from $8.9 million to $18.4 million. As shown in Figure 1, NIGMS funds more R15s than any other institute, accounting in recent years for between 21% and 29% of the NIH total.

| Fiscal Year | Number of Applications | Number of Awards | Total Funding ($ in thousands) |
|---|---|---|---|
| 2004 | 128 | 48 | $9,867 |
| 2005 | 142 | 49 | $10,382 |
| 2006 | 171 | 50 | $10,602 |
| 2007 | 200 | 63 | $13,387 |
| 2008 | 167 | 53 | $11,158 |
| 2009 | 172 | 42 | $8,903 |
| 2010 | 199 | 36 | $9,766 |
| 2011 | 313 | 62 | $18,441 |
| 2012 | 306 | 56 | $17,925 |
| 2013 | 304 | 45 | $16,035 |

Table 1. Number of R15 applications received and awards made by NIGMS, and total R15 funding, in Fiscal Years 2004-2013.

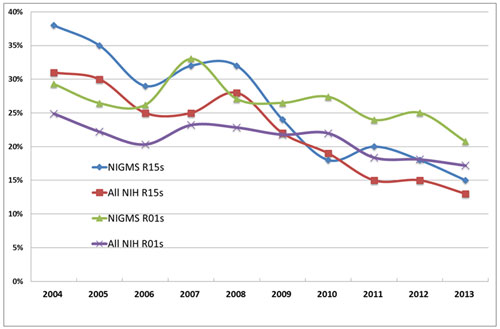

The NIGMS success rate for R15s tends to be higher than the overall NIH success rate, although both have been declining steadily over the past 10 years (Figure 2). This decline is due to several factors: an increase in the number of applications, an increase in the size of AREA grants in Fiscal Year 2010 from $150,000 to $300,000 in direct costs, and a flat NIH budget. Figure 2 also shows that success rates for R01 grants have been falling. While success rates for both NIGMS and NIH R15 grants had usually been higher than those for R01s, in the last several years they have been lower.
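For readers who want to explore the Table 1 numbers themselves, here is a minimal sketch that derives two rough indicators from them: awards as a percentage of applications, and average funding per award. It assumes a simple awards-divided-by-applications ratio, which may differ from the official success rates plotted in Figure 2, and it uses total funding, which is not the same as the direct-cost figures mentioned above. The names `TABLE_1` and `summarize` are made up for this illustration.

```python
# Rows copied from Table 1:
# (fiscal year, applications, awards, total funding in $ thousands)
TABLE_1 = [
    (2004, 128, 48, 9867),
    (2005, 142, 49, 10382),
    (2006, 171, 50, 10602),
    (2007, 200, 63, 13387),
    (2008, 167, 53, 11158),
    (2009, 172, 42, 8903),
    (2010, 199, 36, 9766),
    (2011, 313, 62, 18441),
    (2012, 306, 56, 17925),
    (2013, 304, 45, 16035),
]


def summarize(rows):
    """Return {year: (awards as % of applications, $ thousands per award)}.

    Assumption: the percentage is a plain awards/applications ratio,
    not necessarily the official NIH success-rate calculation.
    """
    summary = {}
    for year, applications, awards, funding_k in rows:
        rate = 100.0 * awards / applications
        per_award = funding_k / awards
        summary[year] = (rate, per_award)
    return summary


if __name__ == "__main__":
    for year, (rate, per_award) in summarize(TABLE_1).items():
        print(f"FY{year}: {rate:5.1f}% awarded, about ${per_award:,.0f}K per award")
```

By this rough measure, awards fall from 37.5% of applications in Fiscal Year 2004 to about 14.8% in Fiscal Year 2013, while average total funding per award rises after Fiscal Year 2010, in line with the factors described above.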

As you can see in Figure 3, over the 10-year period, the funds spent on R15 grants have fluctuated and have made up between about 0.7% and 1.3% of NIGMS’ budget for research project grants (which are largely R01s). Across NIH, AREA grants account for an even smaller amount, about 0.5% last year compared with 1.2% for NIGMS.

Does our investment in AREA grants pay off? There are a number of ways to estimate their impact, including quantitative measures such as the number of publications that result from the project, as well as outcomes that are more difficult to measure such as encouraging students to pursue careers in biomedical research and enhancing the educational environment.

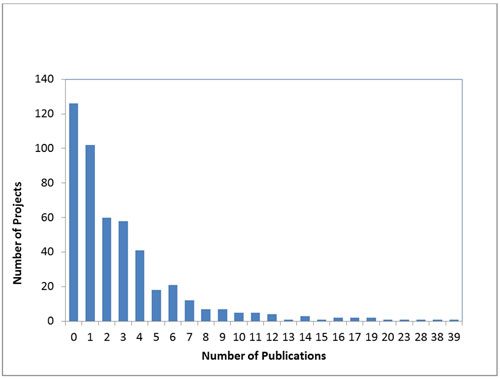

While the number of publications per grant is far from a perfect indicator of research productivity, we found that the number of publications attributed to any AREA grant over its entire duration varies tremendously, as shown in Figure 4. Nearly three-fourths of all AREA grantees publish at least one paper, and some produce many publications over the lifetime of their awards. Considering that AREA grantees often have heavy teaching loads and employ undergraduates rather than graduate students and postdocs to assist with the research, these numbers are encouraging.

We hope you will share your AREA grant success stories with us.

I agree that the AREA program is worthy of increased support.

Unfortunately, it is hard to compare this analysis directly to the analysis Jeremy Berg posted on R01 publication rates back in Sept 2010. That post reported the average publications resulting from R01s but parsed it as a function of annual direct costs. The number of pubs varied from 5 to 8 for a range that started at $150k/yr and went up to $1.4M/yr. AREA grants have lower direct costs than any of those bins. However, the publication rate for AREA grants does not appear substantially lower. That is impressive and suggests, by an admittedly imperfect metric, that AREA grants are very cost effective.

And I think that AREA grants have a higher impact on training and mentoring of young scientists than do R01s. As an AREA recipient I have seen this impact first hand. I have been able to support many students that may otherwise not have stuck with a career in science. Some of those have continued on to very successful graduate school careers.

The change in eligibility requirements in 2010 was a major change to the program. I would be curious to know how many more institutions have been applying for AREA grants since that change. I suspect that the increase was large. I suspect that before the change, the majority of applications came from non-PhD granting departments and I wonder if that has changed. While both types of institutions merit support, I worry that the non-PhD granting institutions will end up losing out under this new policy.

I see many good reasons to consider increasing NIGMS support for AREA grants in hopes of keeping the success rate from sliding further below the R01 rate.

Some of my thoughts on why it is no longer a constructive use of my time to apply to the R15 program are summarized at my web site below. The change in eligibility in 2010 dramatically increased the number of submissions.

I am from a non-PhD granting institute, and I would like to point out that, if you want to include publications as one of the assessment measures of AREA grants, the time window should extend several years beyond the expiration date of the award. In my laboratory, the most important papers are published after the first author has graduated and sometimes as long as 3 years after the grant expired! This delay is not the result of poor student productivity or poor organizational skills on my part but, rather, a reflection of the many jobs I must juggle on a daily basis (in addition to managing research) and the fact that any given student may be in the lab for only 1-2 years before they graduate.

The data reflect all publications through 2013 from a particular grant regardless of how long it took to publish them after the grant ended.

For anyone who has been promoting the value of this mechanism for the largely undergraduate institutions, for example, in the IDeA states, there is absolutely nothing new in these trends. The question is, what is the NIH doing about it? If they truly value the program, and understand its very important role in research training and in the development of the next generation of biomedical research scientists, then they need to increase the amount awarded to this program. As this is a trans-NIH program, NIGMS needs to be leading the charge to get the problem fixed. Let’s go already!

During my career as an NIH-funded professor at the University of Pennsylvania, I frequently worked & published with my undergraduate students. I used specific strategies to involve them in the research project and to mentor their education & careers, whether they went on to become scientists or clinicians. I was actively ridiculed for my involvement with undergraduate students because “they were a waste of time”. However, a thoughtful selection of students, with consideration of their personal goals, values, & commitment to themselves & the project, was essential to what I consider my overwhelming success.

What do the abbreviations in Fig 1 (AA, AI, ...) mean? Are they institutions?

Since NIGMS does not have paylines, what is a fundable score? Is a score of 30 good and what is the likelihood of funding?

You are correct that NIGMS does not have paylines. Within the budget that we allocate each year to AREA grants, NIGMS staff consider the score as well as other factors, such as portfolio balance and other programmatic priorities. Investigators should discuss the likelihood of funding with their program directors.