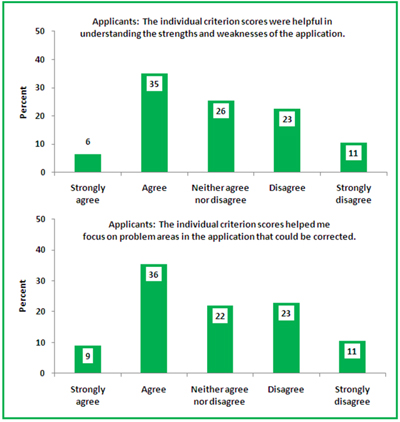

One of the key principles of the NIH Enhancing Peer Review efforts was a commitment to a continuous review of peer review. In that spirit, NIH conducted a broad survey of grant applicants, reviewers, advisory council members and NIH program and review officers to examine the perceived value of many of the changes that were made. The results of this survey are now available. The report analyzes responses from these groups on topics including the nine-point scoring scale, criterion scores, consistency, bulleted critiques, enhanced review criteria and the clustering of new investigator and clinical research applications.

Please feel free to comment here or on Rock Talk, where NIH Deputy Director for Extramural Research Sally Rockey recently mentioned the release of this survey.

I am underwhelmed by the reviewer and applicant responses to the new review system. I have sat on both sides of the table and was not surveyed. I will say that I think it very bad that application submissions were limited to two, especially during the initial phase of all these changes. I believe strongly that an important function of peer review is to improve the science that our country funds and we should not try to remove that essential function. How about this: you can submit a proposal as many times as you like AS LONG AS THE SCORE KEEPS IMPROVING. This accomplishes three goals: 1) it limits resubmissions (same score twice and the proposal is out), 2) it allows iterative improvement of important projects, and 3) it puts the decision about whether the science is important back into the hands of the peer reviewers, where it belongs.

I find it curious that the survey didn’t ask any questions about phasing out A2 applications. This seems to me to be the most important/significant change that was made. I would very much like to see a study of the impacts of this change – both positive and negative. Anecdotally, it certainly appears to be having many potentially profound negative impacts.

This is tangentially related, but relevant to the broader topic of the quality of NIH peer review.

I have been on both sides, and I love the format as a reviewer. However, on the other side, I have found the quality to be inconsistent. Several times I have seen reviewers state that something was missing, but it was there in plain sight, i.e., a full paragraph and/or figure. It would be nice if you could pass this information back in some way that was tracked internally, so that reviewers themselves are held to a certain standard. Now, I realize everyone makes mistakes and is in a rush sometimes, but some internal rating system of reviewers would be nice (maybe it’s already there). This would sort of be like what baseball does with its umpires.

Thoughts?

Want to enhance peer review?

1. Get rid of the triage, which currently means that nonsense written by reviewers can be largely swept under the carpet.

2. Strengthen the position of PO/PD, by hiring active, experienced investigators for temporary assignments. The PO/PD needs to have the power to stand up during the panel meeting and say “This is BS!”.

The two points above are not novel; this is the NSF system. Additionally, I suggest:

3. Break the culture of tolerance toward substandard reviews/reviewers. Brand such reviews as scientific misconduct. I have been hearing this “improving peer review” mantra for years, and apparently the system still needs improving…

4. The discussion of the scoring system etc. is moot without destroying the current institutional culture within NIH.

Under the current system, NIH is funding grants potentially worth several millions of dollars based on 12 pages of science! This being the case, “THE RULES” for doing this must be more transparent, and EVERY part of the process, including reviewers and program staff, should work as the ideal design intended.

Now we know that every system has faults, and nothing works as ideally designed, but this new system has too many downsides to count that need to be addressed.

One place to start would be with substandard reviewers (or good scientists reviewing in areas where they lack competencies); this is certainly not good for this “enhanced review process”. One fix may be to expand the pool of reviewers (in this modern era of communications, with conference calls, Skype, etc., this could be accomplished). Certainly the same “cycling” study section doesn’t have to be the norm.

Other issues that need to be addressed: Only 2 submissions means that in essence, particularly for a new investigator, the first submission can be triaged and the second can be killed by just one reviewer! This of course is happening in the same study section, and no accountability/transparency that we know of is in place to prevent or discourage this.

As commented on before: Who is holding the reviewers accountable for not reading the grants and for asking for detailed info when it is in plain sight, or has been referenced in the literature? Has anybody asked “exactly how much information is one to put into 12 pages for an R01 grant” to satisfy a determined skeptical reviewer? Do the reviewers get docked for this, by ANY rating system or the PO/PD? This, along with the narrowed page limitation, has made the situation even more difficult for a new investigator.

In theory the “enhanced review” was supposed to help ALL; in practice it only helps the system (PO/PD and professional reviewers) sweep things under the rug (as has been said before) and only hurts the investigator, particularly the new investigator.

For this system to work (without any changes), ALL parts of the process have to work 100% (which we know for various reasons isn’t going to happen all the time). The program officers need to push to have inconsistencies resolved, so that the individual category scores and the comments given for each category are consistent and based on science, not personal opinions, biases or politics. (How is it possible that two reviewers give a high score and one reviewer gives a low one, and the PO or study section rubber-stamps this?)

Keeping the same reviewers cycling through the study sections again and again (which is almost like having professional reviewers) with the same biases and thought processes is diminishing the whole process. There has to be some way to fix this inbreeding of thoughts and biases and the use of the same reviewers. It is diminishing the science, not to mention breeding mediocrity.

The new system, as implemented in reality, has exacerbated the old problems in the system, and using “spin” and semantics and calling this system “enhanced” is not a solution.