In an earlier post, I presented an analysis of the relationship between the average significance criterion scores provided independently by individual reviewers and the overall impact scores determined at the end of the study section discussion for a sample of 360 NIGMS R01 grant applications reviewed during the October 2009 Council round. Based on the interest in this analysis reflected here and on other blogs, including DrugMonkey and Medical Writing, Editing & Grantsmanship, I want to provide some additional aspects of this analysis.

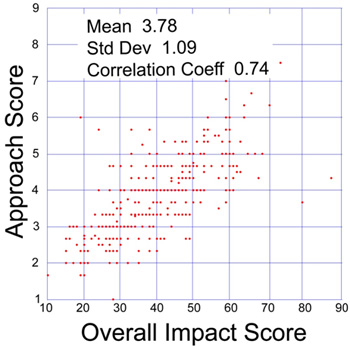

As I noted in the recent post, the criterion score most strongly correlated (0.74) with the overall impact score is approach. Here is a plot showing this correlation:

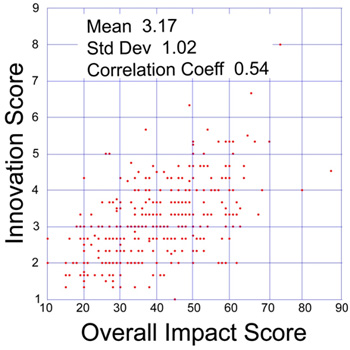

Similarly, here is a plot comparing the average innovation criterion score and the overall impact score:

Note that the overall impact score is NOT derived by combining the individual criterion scores. This policy is based on several considerations, including:

- The effect of the individual criterion scores on the overall impact score is expected to depend on the nature of the project. For example, an application directed toward developing a community resource may not be highly innovative; indeed, a high level of innovation may be undesirable in this context. Nonetheless, such a project may receive a high overall impact score if the approach and significance are strong.

- The overall impact score is refined over the course of a study section discussion, whereas the individual criterion scores are not.

That being said, it is still possible to derive the average behavior of the study sections involved in reviewing these applications from their scores. A linear combination of the individual criterion scores, with weighting factors optimized to fit the overall impact score, has a correlation coefficient of 0.78 with the overall impact score. The optimized weights are approximately related to the correlation coefficients between the individual criterion scores and the overall impact score.
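To make the idea of an optimized linear combination concrete, here is a minimal sketch using ordinary least squares. It is not the analysis behind the 0.78 figure: the data are synthetic, only three criteria are simulated for brevity, and the generating weights and noise level are arbitrary assumptions chosen for illustration. All function names (`pearson`, `solve`, `fit_weights`, `combine`) are hypothetical helpers.

```python
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def solve(matrix, rhs):
    """Solve matrix * w = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * solution[j] for j in range(i + 1, n))
        solution[i] = (aug[i][n] - tail) / aug[i][i]
    return solution


def fit_weights(columns, target):
    """Least-squares weights (intercept first) via the normal equations."""
    design = [[1.0] * len(target)] + [list(c) for c in columns]
    xtx = [[sum(p * q for p, q in zip(ci, cj)) for cj in design] for ci in design]
    xty = [sum(p * q for p, q in zip(ci, target)) for ci in design]
    return solve(xtx, xty)


def combine(weights, columns):
    """Evaluate intercept + sum of weight * criterion score for each application."""
    intercept, slopes = weights[0], weights[1:]
    return [intercept + sum(w * col[i] for w, col in zip(slopes, columns))
            for i in range(len(columns[0]))]


# SYNTHETIC data (assumption): 360 applications, average criterion scores on a
# 1-9 scale, impact score built from arbitrary weights plus random noise.
rng = random.Random(0)
n_apps = 360
criteria = {name: [rng.uniform(1, 9) for _ in range(n_apps)]
            for name in ("significance", "approach", "innovation")}
impact = [10 * (0.3 * s + 0.5 * a + 0.2 * i) + rng.gauss(0, 10)
          for s, a, i in zip(criteria["significance"],
                             criteria["approach"],
                             criteria["innovation"])]

columns = list(criteria.values())
weights = fit_weights(columns, impact)
combined_r = pearson(combine(weights, columns), impact)
single_r = {name: pearson(scores, impact) for name, scores in criteria.items()}

print("weights (intercept first):", [round(w, 2) for w in weights])
print("single-criterion r:", {k: round(v, 2) for k, v in single_r.items()})
print("combined r:", round(combined_r, 2))
```

Because the weights are fitted to the same data, the correlation of the combination with the impact score can never be lower than the best single-criterion correlation, which is consistent with 0.78 for the combination versus 0.74 for approach alone.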

The availability of individual criterion scores provides useful data for analyzing study section behavior. In addition, these criterion scores are important parameters that can assist program staff in making funding recommendations.

My understanding as a reviewer has been that if one’s assessment of a particular criterion changes in the course of the discussion of a grant, one ought to revise that criterion’s score and bullet points during the edit phase as necessary to reflect one’s final assessment. I have done this on occasion as a reviewer. However, I have also received reviews where, based on the summary of discussion and final impact score, it is abundantly clear that a reviewer’s assessment of a particular criterion changed during the discussion, yet she did not edit her criterion score or bullet points.

Could you run a full regression and account for the Overall Impact based on all the component scores? Of course, Approach and Innovation are likely to be correlated, and it would be interesting to examine all correlations in detail. I also wonder how many independent variables could account for the scores?

PT: We have posted a full regression analysis of this data set.

This is very useful information for us early stage investigators. You are doing us a great service by posting this important information. I am curious: for impact scores of 3, there is a huge spread in overall score. If you take only grants with an impact score and do a correlation for all the other criteria vs. overall score, which one plays the largest role in determining the overall score? I would imagine it is approach but would love to actually see the data.

There are approximately 100 applications with overall impact scores better than 30. For this subset of applications, the correlation coefficients between overall impact score and the criterion scores are as follows: Significance, 0.29; Approach, 0.39; Innovation, 0.26; Investigator, 0.36; and Environment, 0.28.

So is this telling us that the full data set correlations are more informative about what makes a bad grant bad than about what makes a good grant good?

Or is this just a range restriction issue?
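One way to explore the range restriction question is a small simulation. The sketch below draws purely synthetic, standardized scores whose full-sample correlation is set near 0.74 (the approach figure quoted in the post), keeps only the best-scoring fraction of about 100 in 360, and recomputes the correlation. The bivariate normal model, the sample size of 20,000 (chosen so sampling noise does not obscure the effect), and all variable names are assumptions made for illustration.

```python
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


rng = random.Random(1)
rho = 0.74        # target full-sample correlation (approach vs. impact in the post)
n_apps = 20000    # ASSUMPTION: large synthetic sample, not the 360 real applications

# Standardized synthetic scores; lower values mean better scores, as at NIH.
criterion = [rng.gauss(0, 1) for _ in range(n_apps)]
impact = [rho * c + (1 - rho ** 2) ** 0.5 * rng.gauss(0, 1) for c in criterion]

# Keep roughly the best 100-in-360 fraction by overall impact score.
n_top = round(n_apps * 100 / 360)
cutoff = sorted(impact)[n_top - 1]
top = [(c, s) for c, s in zip(criterion, impact) if s <= cutoff]

full_r = pearson(criterion, impact)
top_r = pearson([c for c, _ in top], [s for _, s in top])

print("full-sample r:", round(full_r, 2))
print("best-scoring subset r:", round(top_r, 2))
```

In this toy model the subset correlation comes out well below the full-sample value even though every application follows the same underlying relationship. That shows range restriction alone can produce a drop of this kind. It does not show that range restriction is the whole explanation for the real data.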